Your AI coding agent doesn't know how we do things here.

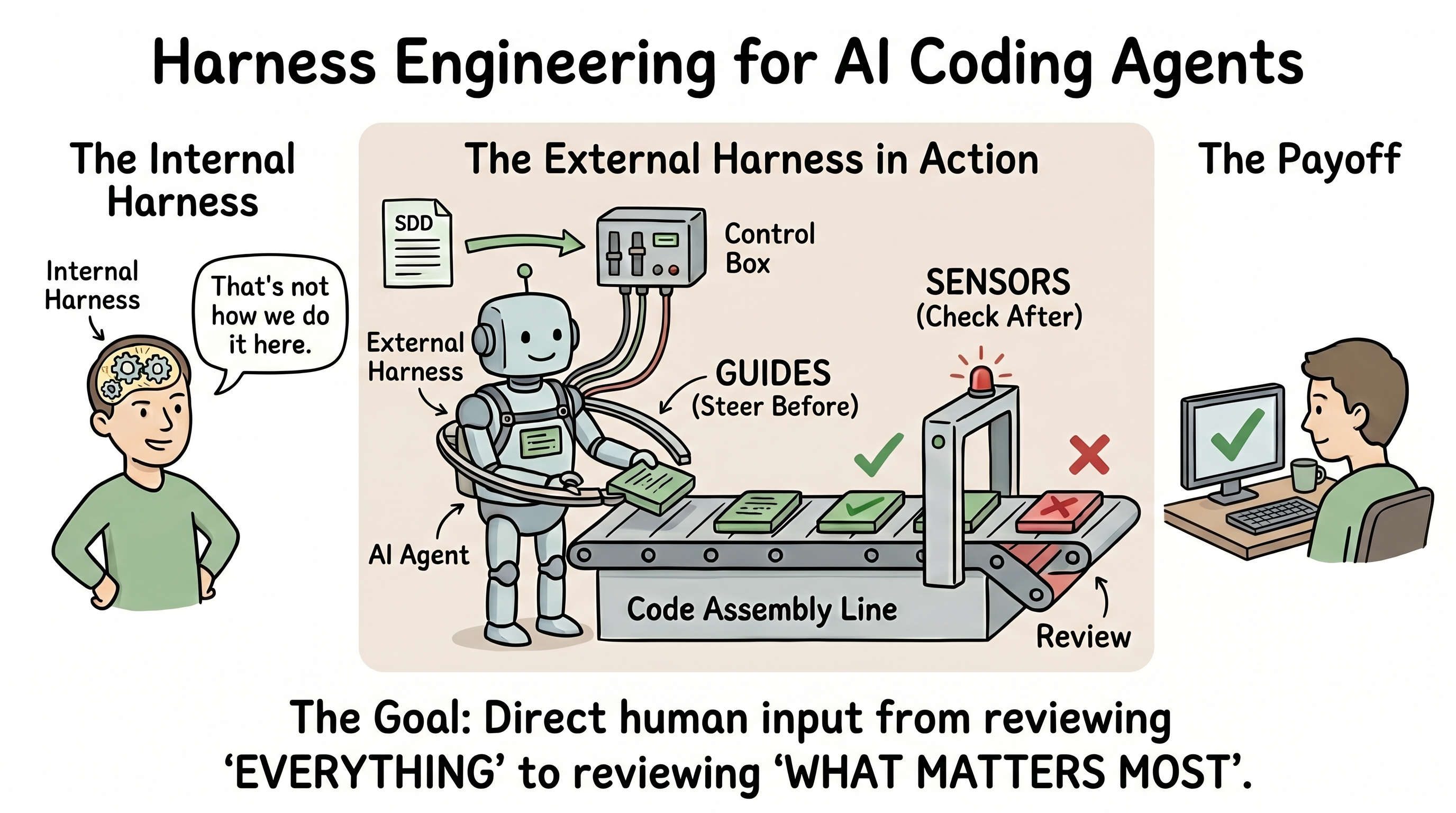

An experienced developer carries an implicit harness: intuition for complexity, a sense of that's not how we do it here. A coding agent has none of that. It will confidently write code that works — and violates everything we care about.

The answer isn't to distrust the agent. It's to build the harness externally.

What this means for SPARK

SDD is a guide. Its absence is what produces fast garbage: code that generates quickly but drifts from architectural intent, accumulates debt invisibly, becomes hard to hand over.

Once a developer hands off a task to an agent, the harness becomes the quality mechanism — not just human review at the end. Champions as harness engineers, not just adoption advocates.

Where we're not there yet

Behavioural harnesses (functional correctness) remain the least mature category — we can't yet reliably verify AI-generated code does what it's supposed to beyond the tests the agent also wrote. Worth tracking.

Also worth asking: if no sensors fire, is quality high or is the harness inadequate? Same question applies to our SDD outputs.

What's worth doing now

- Define guide templates per service topology — reusable patterns teams pick up, not reinvent

- Treat linter and test coverage as SDD completion criteria, not optional polish

- Track first-pass acceptance rates on agent-generated code — the leading indicator the harness is working

15 minutes well spent: Harness Engineering for Coding Agent Users — Martin Fowler